In Part 1 of this series, I examined why India's sovereign AI narrative risks conflating national origin with product-market fit. But the sovereignty argument has a problem that goes beyond market dynamics. It has an evidence problem.

India's AI Impact Summit last week produced a parade of announcements about sovereign artificial intelligence. Sarvam AI launched a 105-billion-parameter model trained from scratch on Indian infrastructure. The government of Odisha committed to a sovereign AI compute hub. IIT Madras broke ground on what it calls India's first sovereign AI research park. Union ministers spoke of technological self-reliance. The word "sovereign" appeared in nearly every keynote.

There is a structural contradiction in this framing. India's most sensitive citizen-facing digital platforms, the systems handling biometric data, vaccination records, identity documents and government procurement, already run on American cloud infrastructure. In practice, the government's own procurement decisions have already answered part of this debate.

The Infrastructure India Actually Chose

CoWIN, the vaccination platform that administered 25 million doses in a single day at the peak of India's Covid-19 campaign, was hosted on Amazon Web Services. It was not a passive default. The government actively chose AWS over the National Informatics Centre's own MeghRaj cloud, the sovereign option that was purpose-built for government workloads. Scale and reliability won over origin.

That choice was not an exception. It was a pattern. DigiYatra, the facial biometric boarding system now processing travellers at airports across the country, runs entirely on AWS using Cognito for identity management and Lambda for credential verification. The Government e-Marketplace, India's national procurement portal handling thousands of crores in public spending, runs on AWS. UMANG, the unified governance app consolidating 1,700 services from 300 departments for over 50 million users, is AWS-powered. DigiLocker, the digital document platform used by over 300 million citizens, sits on the same infrastructure. Even Aadhaar-linked data is not immune: the 2025 UIDAI circular mandates that Aadhaar Data Vaults be hosted only on MeitY-empanelled cloud environments, and both AWS and Microsoft Azure hold that empanelment.

This is not a marginal dependency. It is the foundational layer of India's digital public infrastructure. The platforms that define India's global reputation for technology-enabled governance—the systems that other developing nations study and attempt to replicate—run on servers owned by companies headquartered in Seattle and Redmond.

The contradiction extends to the summit itself. The AI Impact Summit website, impact.indiaai.gov.in, is served through Amazon CloudFront, AWS's content delivery network. The Impact Expo site stores every asset, from speaker headshots to venue maps and advisory PDFs, in an AWS S3 bucket in the Mumbai region, served from 'aiexpo.s3.ap-south-1.amazonaws.com'. Even the AI Responsibility Pledge platform, the one that processed 250,000 pledges for a Guinness World Record during the summit, runs on Fastly, an American CDN. The summit’s digital delivery stack relied heavily on U.S.-based cloud and edge providers.

Frontier Models on Indian Soil

Now layer frontier AI models on top of that infrastructure. Azure OpenAI, including GPT, is generally available in Microsoft's South India and Central India regions. Google's Vertex AI serves Gemini models from data centres in Mumbai and Delhi. Amazon Bedrock, which provides access to Anthropic's Claude and Meta's Llama among others, has been generally available in the Mumbai region since May 2024. Google has gone further with Distributed Cloud, offering Gemini on air-gapped on-premises deployments for governments with the strictest data residency requirements; Singapore’s GovTech is an early adopter.

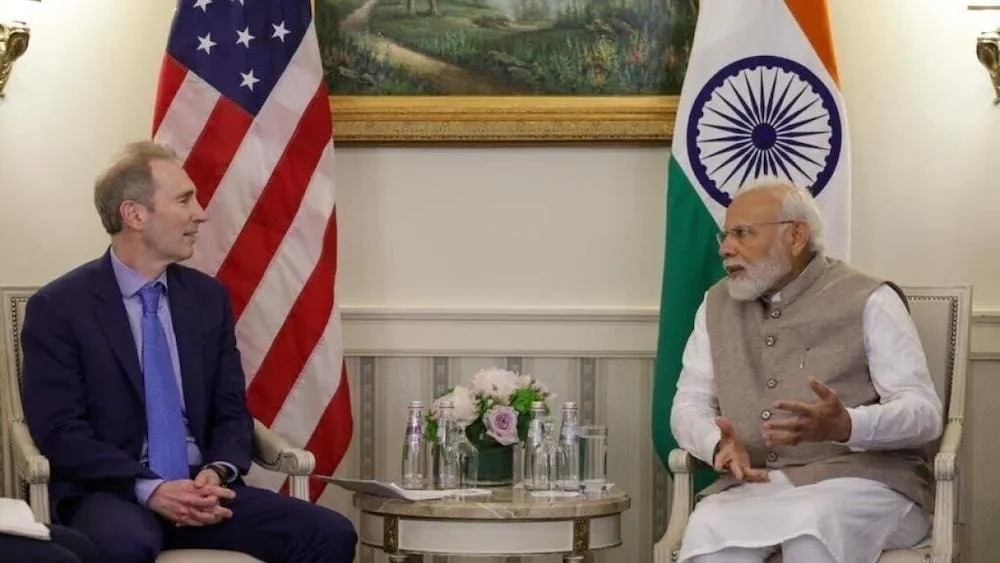

At the summit itself, where sovereign AI was the rallying cry, the biggest commitments came from the companies whose products already underpin the infrastructure. Sundar Pichai announced a $15 billion AI infrastructure investment including a full-stack hub in Visakhapatnam with gigawatt-scale compute capacity. Google Cloud committed to building an AI platform for 20 million public servants across 800 districts in 18 languages. OpenAI announced it would be the first customer of Tata Consultancy Services’ data centre business.

If data residency were the definition of sovereignty, GPT running in an Azure data centre in Pune, Gemini served from a Google Cloud node in Mumbai, and Claude accessible through Amazon Bedrock in the same region would already meet the threshold. The question is no longer whether India’s AI will depend on foreign technology. It already does. The question is what kind of dependency India is willing to accept and what it builds on top of it.

As the Mozilla Foundation put it in a statement timed to the summit, "a state concerned with AI sovereignty in 2026 cannot credibly justify financing a foreign, vertically integrated AI stack while neglecting investment in domestic and open-source alternatives." This is not a hypothetical risk. Sam Altman revealed at the summit that ChatGPT has more than 100 million weekly active users in India. Anthropic announced it is opening its first India office in Bengaluru, calling India its second-largest market. These companies are not planning to enter India. They are already embedded in Indian developer workflows.

Sovereignty Has Layers

The summit rhetoric treated sovereignty as a single, binary condition: either India's AI is sovereign or it is not. In practice, sovereignty operates across at least five distinct layers, and India's position varies dramatically across them.

- Data sovereignty, where data is stored and under what legal framework, is where India has made genuine progress. The Digital Personal Data Protection Act and MeitY empanelment standards create meaningful guardrails even when the underlying infrastructure is foreign-owned.

- Infrastructure sovereignty, control over cloud, compute and networking, is where the evidence above is most damning.

- Model sovereignty, ownership of weights, training data, alignment and evaluations, remains aspirational: Sarvam's 105B model lacks published weights, technical reports or system cards.

- Application sovereignty, who owns user relationships and workflows, is arguably India's strongest position, with Indian startups building on global models for domestic markets.

- Standards sovereignty, who defines benchmarks and evaluation frameworks, is where India is almost entirely absent; without community-governed Indic evaluation suites, India's models will continue to be measured by standards set in San Francisco and Beijing.

Public rhetoric that treats all five layers as interchangeable leads to policy confusion and misallocated capital. Precision about which layer is being discussed would sharpen both strategy and investment.

What Policy Should Fund Next

The Carnegie Endowment analysis published earlier this year argued that India's AI strategy has focused almost entirely on compute infrastructure and Indic language models while neglecting data quality, talent and research depth. The infrastructure dependency documented above confirms that diagnosis. Policy needs to move beyond GPU procurement and towards instruments that address specific sovereignty layers.

The most immediate gap is data. The government should fund shared public datasets for Indic languages and government workflows, giving both domestic and foreign model builders the raw material to serve Indian users better. High-quality, curated training data is a sovereign asset that does not depend on building a domestic hyperscaler.

The second is evaluation. India needs benchmark suites for production tasks, not just academic tests, so that procurement decisions can be based on verified performance rather than summit claims. Open model evaluation standards, including mandatory technical reports, system cards and published evaluation results, should be a condition for any model receiving public funding or official endorsement.

The third is talent retention. Grants for applied AI labs in Indian universities would help retain the deployment expertise that currently leaves for Google, Meta and OpenAI.

The fourth is infrastructure diversification. Domestic cloud reliability standards and migration targets would create a credible pathway for reducing hyperscaler dependency over time, without the disruption of abrupt mandates. Multi-vendor procurement rules would prevent the single-cloud lock-in that the current DPI stack has drifted into. And incentives for open-weight model releases would accelerate ecosystem development by ensuring that publicly funded AI is publicly auditable.

India's entire IndiaAI Mission budget is approximately $1.1 billion. OpenAI alone has raised more than $18 billion, roughly 16 times as much. Given that asymmetry, precision in how public funds are allocated matters more than the total figure.

Where the Opportunity Still Is

The dependency is real, but so is the opportunity. In the emerging AI stack, value does not accrue only to model builders or hyperscalers. The investable gaps sit between infrastructure and end users: evaluation tooling that can verify model performance on Indian languages in production; local data pipelines that curate the training and fine-tuning datasets India's ecosystem needs; vertical AI applications in healthcare, agriculture and government service delivery where domain knowledge matters more than model scale; multilingual voice platforms that bridge the gap between English-first frontier models and India's linguistic reality; and the enterprise deployment stack that helps Indian companies move from experimentation to production.

For investors, the question is not which model wins. It is who owns the translation layer between global AI capability and Indian market reality. The companies that capture these positions will benefit regardless of whether the underlying model is Indian, American or Chinese.

The Template Already Exists

India has done this before. The Unified Payments Interface did not succeed because it was Indian. It succeeded because it was technically elegant, radically open and solved a real problem at population scale. Today, UPI processes over 16 billion transactions per month, is being adopted by countries from Singapore to France and has become the global reference architecture for real-time payments.

Nobody calls UPI sovereign payments infrastructure. They call it the best payments infrastructure. That distinction is exactly the shift India's AI ecosystem needs to make. The country has the structural advantages to get there: 1.4 billion potential users, a massive developer community and a talent base that the world's largest AI companies are actively competing for. The question is whether the ecosystem has the patience, capital and honesty to pursue global competitiveness rather than settling for headline visibility at government summits while the platforms that actually serve Indian citizens continue to run on Amazon Web Services.