In a courtroom in Los Angeles, the life of a young woman, identified only by her initials, K.G.M., was reduced to evidence. Hours spent scrolling. Nights without sleep. A mind drawn, again and again, toward a glowing screen that never asked her to stop.

Meta and YouTube were found liable for negligence in protecting a minor, with damages awarded for the psychological toll of systems that plaintiffs argued were engineered to be addictive and to exploit the developmental vulnerabilities of young users.

The jury did not deliberate on a single post, a single video, or a single act of neglect. It considered something more unsettling. The possibility that harm was not incidental to these platforms, but intrinsic to their design.

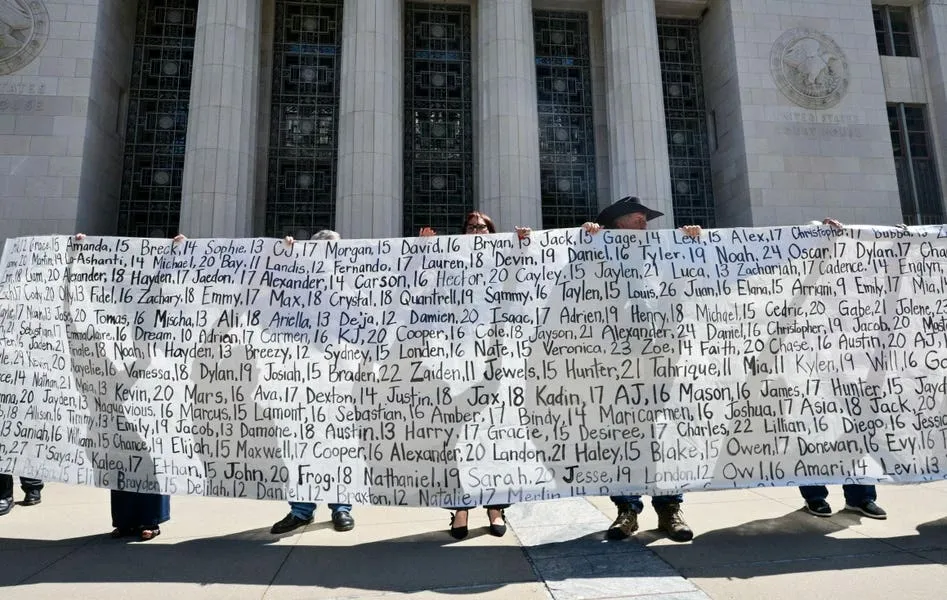

The case is one among thousands of coordinated actions against Meta, YouTube, TikTok, and other platforms now winding through courts across the United States, brought by families, school districts, and states.

Together, they advance a claim that would have seemed improbable a decade ago.

That social media platforms may be less like neutral conduits of expression and more like constructed environments that shape, and at times deform, the lives of their users.

These cases describe a generation increasingly marked by emotional strain and by the quiet normalization of distress, along with the institutional burden that follows.

Internal documents cited in litigation suggest that some companies were aware of the risks their platforms posed to young users, including their effects on emotional development and psychological stability.

The claim is not about negligence at the margins. It is about intentionality at the core.

The analogy to Big Tobacco has been invoked so often that it risks cliché. Yet it persists because it captures something essential. Not the product, but the logic. A system that depends on sustained consumption, that refines its methods through behavioral insight, and that confronts, sooner or later, the question of whether its success is compatible with human well-being.

The exposure of an architecture

The architecture is subtle. It does not impose itself as force. It presents itself as invitation. A feed that never ends. A video that begins before the last one has finished. An autoplay that eliminates decision points. An infinite scroll that ensures that there is always a next. A notification that arrives not when something urgent occurs, but when engagement begins to fade, creating intermittent reinforcement cycles.

Each element appears trivial.

Together, they form a system that removes friction from consumption and inserts friction into disengagement. They are a coherent architecture of engagement turned into an instrument of capture, tailored to the user's history, their preferences, their emotional fault lines. They are built on mechanisms that behavioral scientists have long understood as the power of variable rewards.

The uncertainty of what comes next is itself the stimulus. The system's intelligence is designed to deliver repeated dopamine rewards that encourage users to chase the next piece of content without natural stopping cues, at the expense of the user's own.

The user is not coerced. That's worse. The user is unconsciously guided. One may argue that the formal ability to exit remains. But what diminishes is the practical ability to do so. The same structure that underpins gambling addiction. A slot machine, translated into code.

Teenagers and even adults scrolling late into the night do not experience this as loss of agency. They experience it as desire. This is the brilliance of the system. It does not confront the will. It shapes it. It cultivates a Technological Stockholm Syndrome.

In the environments described in court, human capacity is eroded. Slowly, persistently, through the introduction of compulsive loops.

Internal research, reported over the past several years, has suggested that these mechanisms can have measurable effects on mental health, particularly among adolescents.

Anxiety, depression, compulsive patterns of use, and body dysmorphia, particularly among young users whose identities are still forming, have been associated with prolonged exposure to these environments. Courts are now being asked to decide whether such outcomes are foreseeable consequences of design choices.

Vulnerability becomes the vector of optimization. The result is not neutral connection but intensified comparison, competition, and emotional volatility.

The architecture of such platforms governs not only what users see, but how they see each other. Metrics of engagement transform social interaction into quantifiable signals. Likes become the unit of validation. Shares become a measure of relevance. Notifications become a proxy for presence. The absence of any of them becomes a signal of exclusion. Visibility becomes a condition for existence. Identity is performed and evaluated in real time.

The system promises to connect people, but then holds that very connection hostage through a form of conditioning for its own exclusive profit. What is optimized is relational extraction and weaponization.

The courtroom translates these experiences into claims of harm. But the underlying phenomenon is broader. On the surface, it appears that attention is what is being degraded. More profoundly, it is the social fabric itself. It is a colonization of the self.

When millions of users are exposed to the same design logic, individual outcomes aggregate into societal patterns.

This is where the language of failure becomes inadequate. The systems are not malfunctioning. They are performing precisely as intended.

Systems can be harmful not because they fail, but because they succeed.

The question, then, is not whether they work. It is what they work for.

Meanwhile, the economic model remains consistent.

Revenue is tied to cognitive capture as a byproduct.

Artificial Integrity is a must-have

There is, in all of this, a paradox.

The systems at issue represent some of the most sophisticated applications of artificial intelligence and are, in a technical sense, remarkably advanced.

And yet, in their interaction with human beings, they reveal a deficit that intelligence alone cannot resolve.

They lack integrity as a structural property.

A capacity to align their operation with the preservation of human agency, the authenticity of relationships, and the stability of the societies in which they are embedded.

Artificial Integrity names this missing dimension.

It is a design principle requiring systems intended to interact with human cognition to protect the sovereignty of the mind, including the capacity to choose, to attend, and to disengage. In other words, cognitive agency.

It would require intelligent systems that respect the rhythms of attention, that reintroduce moments of reflection, that recognize vulnerability not as an opportunity for capture but as a condition for protection. The "Inner."

It would demand that intelligent systems support meaningful, non-exploitative interactions; interdependence; intersubjective recognition; interpersonal trust; interconnection; interlocution; intercaring. The "Inter."

It would call for values alignment: one in which success is measured by contribution to human and societal well-being, and one that is adaptive to context and capable of moral judgment even when norms are blurry, incomplete, or conflicting. The "Outer."

Absent Artificial Integrity, intelligent systems will continue to do what they have been built to do: extract the self for profit, spelling out the alphabet of an ecosystem's facebook that seeks to minimize resistance and maximize endurance at life's expense, and, in doing so, driving the institutionalization of mental dispossession.

This is why traditional frameworks of responsibility struggle to keep pace. Consent assumes clarity. Choice assumes neutrality. Both are destabilized when environments are engineered to influence behavior at a subconscious level.

The court is beginning to adapt. It's about time.

If design can be harmful, then design can be accountable.

Artificial Integrity does not oppose technology. It shifts the question from what systems could do to what they should do. This reframing is critical. It moves the debate away from more moderation and toward core system architecture.

If successful, it opens the door to a new form of stewardship, one in which systems are governed not only by what they allow but by how they are built.

The courtroom in Los Angeles offers no final answer to these questions. Appeals will follow. The law will evolve. But something has changed.

The verdict against Meta and YouTube, whatever its final legal outcome, marks a turning point.

For the first time, a jury has been asked to consider whether the harm experienced by a young user was not the result of misuse, but of design. Whether the intrinsic functioning of the system itself crossed a threshold.

If that question continues to be asked, and answered, the consequences will extend far beyond a single verdict. They will reach into the foundations of how artificially intelligent systems are built and judged.

And they will make clear what this moment already suggests.

That the absence of Artificial Integrity is no longer a side concern.

It is a condition with life-altering consequences.

And that in systems designed to shape the human mind, Artificial Integrity is not optional.